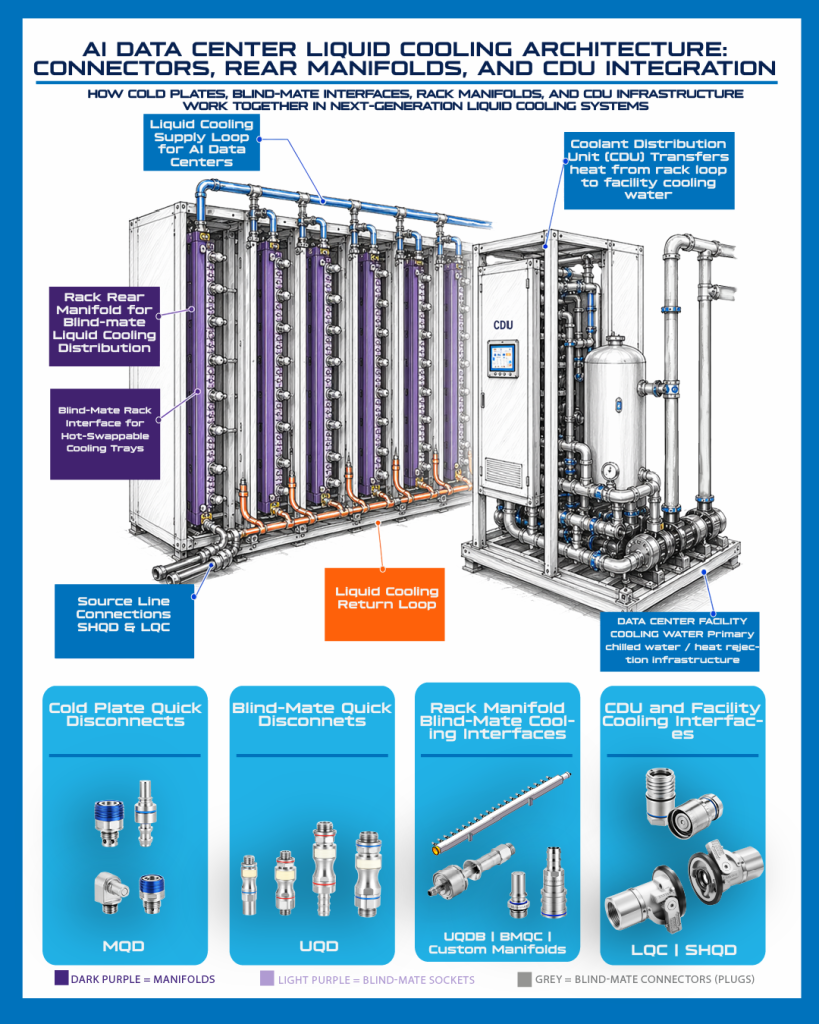

Artificial intelligence has fundamentally changed the physics inside the data center. Racks that once operated comfortably in the 5 to 20 kW range now exceed 30 kW, 60 kW, and even 100 kW per rack. At these densities, air cooling cannot remove heat efficiently enough to keep up with power demands. Liquid cooling is no longer an emerging technology; it is essential to the efficient operation of the modern AI data center. Liquid cooling connectors are proving to be a critical component in maintaining system efficiency and reliability.
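To put those rack densities in terms of coolant, the steady-state heat balance Q = m · cp · ΔT gives the flow a loop has to carry. The sketch below is a rough illustration only: it assumes plain water properties and a 10 K coolant temperature rise, neither of which comes from this article, and real loops often run glycol mixes with different density and specific heat. The function name `required_flow_lpm` is hypothetical.

```python
# Assumed fluid properties for plain water near 30 degrees C (not from the article).
WATER_DENSITY_KG_PER_M3 = 996.0
WATER_SPECIFIC_HEAT_J_PER_KG_K = 4180.0


def required_flow_lpm(heat_load_kw: float,
                      delta_t_k: float,
                      density: float = WATER_DENSITY_KG_PER_M3,
                      specific_heat: float = WATER_SPECIFIC_HEAT_J_PER_KG_K) -> float:
    """Volumetric flow in L/min needed to carry heat_load_kw at a coolant rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    # Heat balance: Q [W] = m_dot [kg/s] * cp [J/(kg*K)] * dT [K]
    mass_flow_kg_s = heat_load_kw * 1000.0 / (specific_heat * delta_t_k)
    volume_flow_m3_s = mass_flow_kg_s / density
    # Convert m^3/s to L/min.
    return volume_flow_m3_s * 1000.0 * 60.0


if __name__ == "__main__":
    # Rack power levels mentioned in the text; the 10 K rise is an assumption.
    for rack_kw in (20, 30, 60, 100):
        flow = required_flow_lpm(rack_kw, delta_t_k=10.0)
        print(f"{rack_kw:>4} kW rack: {flow:6.1f} L/min at a 10 K rise")
```

Under these assumptions the required flow scales linearly with rack power, so every step up in density passes proportionally more coolant through the same connectors.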

Why Liquid Cooling Is Essential in AI Data Centers

Inside high density server cooling systems, connectors have become one of the most critical components in the thermal chain. In AI environments, uptime is directly tied to compute performance. A single thermal anomaly of as little as 2 degrees can shut down a tray. Modern GPU architectures rely on cold plates with microchannels measured in microns. Any flow restriction, contamination, misalignment, pressure instability, or unexpected pressure drop across the connector can trigger protective shutdowns. Connectors are not simple fittings. They are the reliability link within the liquid cooling chain.


AI data center liquid cooling connectors must deliver consistent thermal performance in high-density environments and handle the varying thermal loads of modern GPUs. Their reliability directly affects the overall performance and efficiency of AI systems.

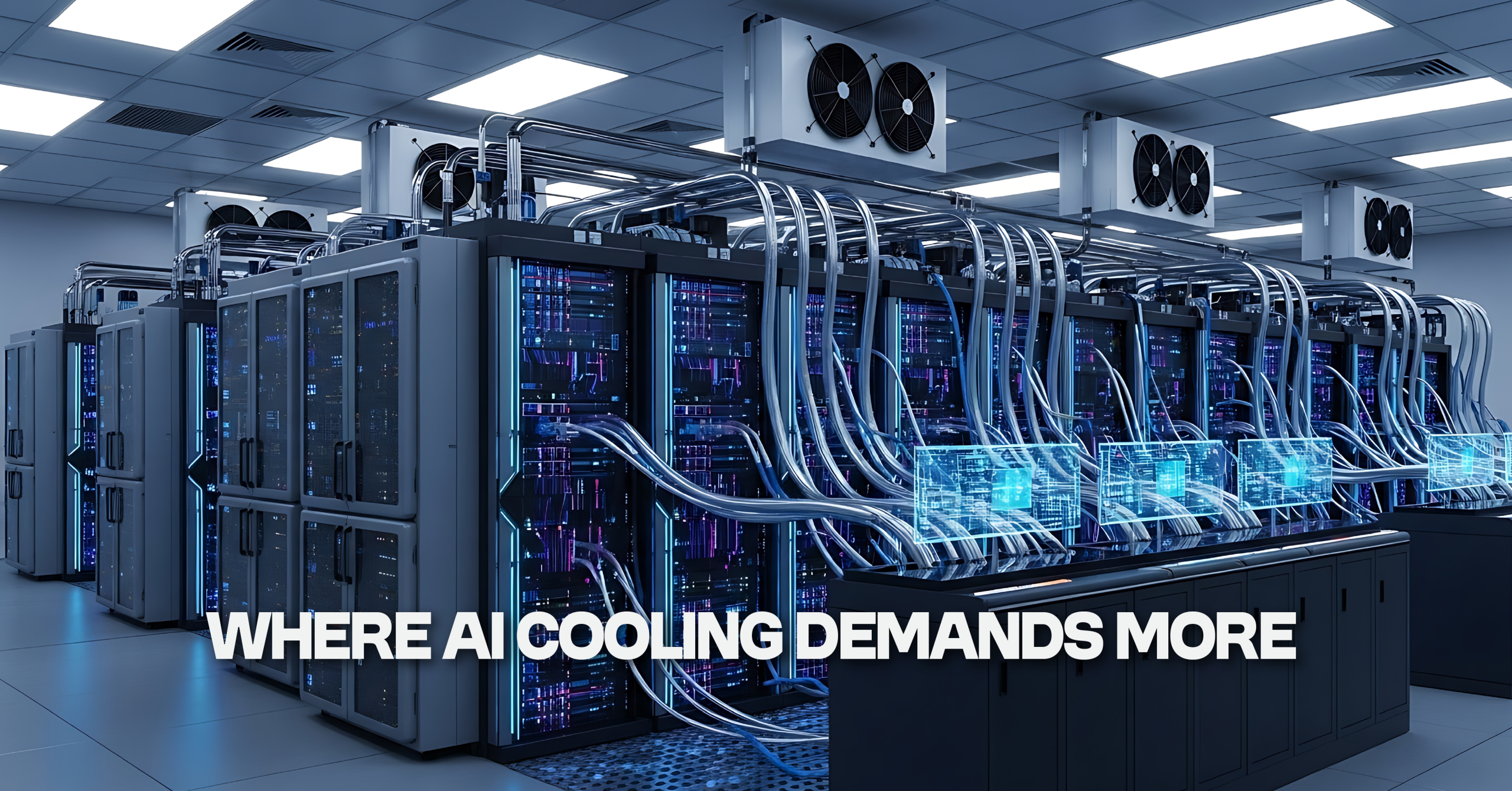

As AI deployments expand across hyperscale operators and next generation compute platforms, connector requirements are evolving just as quickly as GPU density. Today’s AI data center liquid cooling connectors must support secure engagement in extremely tight rack real estate, blind mate capability with ever increasing radial and angular misalignment tolerance, minimal fluid loss during service events, minimal pressure drop across the connector, higher pressure capability, and cleanliness suitable for microchannel cold plates. They must also remain compatible with evolving Open Compute Project standards and GPU driven specifications. Designs that were acceptable just a few years ago are often no longer sufficient. The Open Compute Project maintains specifications and related design materials for Open Rack v3 (ORV3) systems and supporting blind mate manifold implementations.

ORV3 and Blind Mate Requirements

Effective serviceability of AI data center liquid cooling connectors contributes to reduced downtime during maintenance.

This shift is especially visible in server designs built around OCP Rack and Power architectures, including ORV3 and the next evolution, ORV3 HPR (High Power Rack), as the industry pushes toward even higher rack power densities. These systems rely heavily on blind mate engagement between trays and rear manifolds. In these environments, radial misalignment tolerance determines installation reliability, angular misalignment tolerance protects against tray variance, and engagement stroke length affects rack depth and serviceability. Millimeters matter. Degrees matter. Pressure drop matters. Meeting the dimensional framework defined by the Open Compute Project is necessary, but it is not enough. Real world installation conditions demand engineering beyond baseline compliance, particularly as OCP blind mate quick disconnects continue to evolve. The OCP Open Rack V3 Blind Mate Manifold Specification specifically addresses the ORV3 blind mate manifold interface and related requirements.
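One way to reason about whether a tray will mate reliably is a tolerance stack-up: add the individual position errors and compare the result with the connector's radial misalignment allowance. The sketch below compares a worst-case sum with a root-sum-square (RSS) estimate. Every number in it is hypothetical and chosen only for illustration; none is taken from the OCP specification or from any connector datasheet, and the contributor names are assumptions.

```python
import math


def angular_offset_mm(angle_deg: float, lever_arm_mm: float) -> float:
    """Lateral offset at the connector tip caused by a tray tilted by angle_deg."""
    return lever_arm_mm * math.tan(math.radians(angle_deg))


def stack_up_mm(contributions_mm):
    """Return (worst_case, rss) for a set of independent +/- position tolerances."""
    values = [abs(c) for c in contributions_mm]
    worst_case = sum(values)                      # every error at its limit, same direction
    rss = math.sqrt(sum(v * v for v in values))   # statistical estimate for independent errors
    return worst_case, rss


# Hypothetical contributors to radial misalignment at the blind mate interface (mm).
tolerances_mm = {
    "manifold mounting position": 0.5,
    "tray chassis fabrication": 0.4,
    "rail clearance": 0.6,
    "tray tilt at connector": angular_offset_mm(angle_deg=0.5, lever_arm_mm=30.0),
}

# Hypothetical connector allowance, not a published figure.
CONNECTOR_RADIAL_TOLERANCE_MM = 1.5

worst, rss = stack_up_mm(tolerances_mm.values())
print(f"worst case: {worst:.2f} mm, RSS: {rss:.2f} mm, "
      f"allowance: {CONNECTOR_RADIAL_TOLERANCE_MM:.2f} mm")
print("worst case within allowance:", worst <= CONNECTOR_RADIAL_TOLERANCE_MM)
print("RSS within allowance:", rss <= CONNECTOR_RADIAL_TOLERANCE_MM)
```

With these made-up inputs the RSS estimate fits inside the allowance while the worst-case sum does not, which is the kind of gap that extra misalignment tolerance in the connector is meant to absorb.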

Serviceability in Dense Rack Environments

At the scale of AI infrastructure, a single system integrates many liquid cooling connectors, and each one must be robust and designed with the future demands of evolving hardware in mind.

Serviceability adds another layer of complexity. Data center maintenance teams operate in dense, constrained environments where access is limited and time is critical. Connector latch geometry must balance ease of actuation with secure retention under pressure. It must allow technicians to operate confidently in tight spaces without increasing the risk of accidental disengagement. Small refinements in latch design and form factor may appear subtle, but across thousands of trays they directly impact service speed, operational stability, and long term reliability.

Cleanliness and Reliability

High-quality AI data center liquid cooling connectors are essential for maintaining system integrity and performance.

Cleanliness is equally critical. AI cooling loops are unforgiving. Cold plates use micro scale channels to dissipate heat efficiently, and even minor particulate contamination can compromise flow and force a thermal shutdown. That is why AI data center liquid cooling connectors must be assembled under controlled conditions, inspected at high magnification, packaged to preserve cleanliness, and fully traceable. Traceability supports faster root cause analysis and strengthens reliability programs in hyperscale environments. In AI data centers, contamination is not a quality issue. It is a performance risk.

Contaminants can severely affect AI data center liquid cooling connectors, emphasizing the need for strict cleanliness protocols. To support this, we exceed standard final-assembly requirements by utilizing ISO 9 cleanroom conditions.

AI data center liquid cooling connectors are integral to the future efficiency of data centers.

Fluid Compatibility and Large-Format Interconnects

At the same time, fluid strategies are expanding. While many deployments still rely on glycol based coolants, the industry is actively exploring dielectric fluids, refrigerant assisted cooling systems, higher operating temperature ranges, and alternative chemistry blends. Connector seals and material selections must evolve alongside these changes. Liquid cooling also extends beyond the tray. Coolant distribution units (CDUs), row to row systems, and facility level loops require large format quick disconnect solutions capable of managing substantial flow volumes with consistent integrity and minimal pressure loss. As high density server cooling systems scale globally, dependable large format interconnects become foundational to long term stability.

Moreover, the evolution of fluid compatibility is crucial for AI data center liquid cooling connectors in today’s dynamic environments.

System Integration Beyond the Connector

For many OEMs, the conversation no longer centers on a single connector. It centers on system integration. Pre configured hose assemblies improve repeatability and reduce installation complexity. Hybrid tubing options support variation in fluid chemistry while maintaining structural performance. Custom manifolds engineered for packaging, flow distribution, and sensor integration help optimize the overall cooling architecture. Where liquid flows, manifolds exist, and designing them correctly strengthens the entire cooling system.

Amphenol Industrial Operations’ custom manifold capabilities extend beyond connectors to AI liquid cooling racks, CDUs, and other fluid distribution systems. Wherever a fluid distribution mechanism is required, we can help engineer a tailored solution to support performance, packaging, and reliability.

The Scale of AI Infrastructure

The scale of this shift is significant. By 2035, it is estimated that 12 percent of total U.S. power consumption will be dedicated to data centers. AI infrastructure is expanding at unprecedented speed, and every rack, every tray, every manifold, and every connector is multiplied across tens of thousands of units. This is not a niche segment. It is the foundation of next generation compute.

Why OEMs Need More Than Commodity Hardware

For OEMs designing liquid cooled AI data center systems, connectors can no longer be treated as commodity hardware. They must support evolving blind mate tolerances, manage pressure drop and higher pressure capability, adapt to fluid chemistry changes, and maintain cleanliness discipline appropriate for hyperscale environments. They must scale as standards shift and architectures advance.

Explore Amphenol Industrial Operations’ Liquid Cooling Solutions

Amphenol Industrial Operations (AIO) brings decades of interconnect expertise to the evolving AI data center ecosystem, delivering engineered solutions that scale from tray to facility. Explore our full portfolio of liquid cooling systems designed for AI data centers, including quick disconnect couplings, large-format fluid interconnects, and integrated manifold solutions.

Explore our full Liquid Cooling Systems portfolio or download the Data Center Power & Cooling brochure to see how AIO supports rack-level and facility-level power and thermal management in modern AI data centers. For technical discussions around architecture, pressure requirements, blind mate design, or deployment strategy, connect with Albert Pinto at Amphenol Industrial Operations.

What are liquid cooling connectors for AI data centers?

Liquid cooling connectors for AI data centers are specialized fluid connection components that help move coolant safely and efficiently through high-density computing systems. They support thermal management, serviceability, and reliability in applications such as server trays, rack manifolds, cooling distribution units, and blind-mate rack interfaces.

Why do blind-mate liquid cooling connectors matter in AI data centers?

Blind-mate liquid cooling connectors matter because they help simplify installation and maintenance in dense rack environments where direct visibility may be limited. They are designed to support faster service and reliable sealed connections in demanding data center conditions.

How does OCP alignment influence liquid cooling connector design?

OCP alignment influences liquid cooling connector design by helping define dimensional and performance expectations for interoperability, installation, and cooling system integration. OCP-aligned connector solutions can support applications ranging from compact server trays to blind-mate rack systems.

Why are low fluid loss and dry-break sealing important in liquid cooling systems?

Low fluid loss and dry-break sealing are important because they help reduce coolant spillage during connection and disconnection. This supports cleaner maintenance, helps protect sensitive equipment, and contributes to more reliable liquid cooling system performance.

What is the role of a CDU in AI data center liquid cooling?

A coolant distribution unit, or CDU, transfers heat from the rack-level cooling loop to facility cooling water infrastructure. It helps regulate coolant flow, pressure, and temperature between IT equipment and the broader data center cooling system.

Where are quick disconnects used in a data center liquid cooling loop?

Quick disconnects can be used at cold plates, compact server trays, blind-mate rack interfaces, rear manifolds, CDU connections, and source-line interfaces. Each location has different requirements for flow, sealing, serviceability, and installation repeatability.

How do rear manifolds support liquid cooling in AI data centers?

Rear manifolds distribute coolant across rack-level cooling loops and help organize supply and return paths. In dense AI data center architectures, rear manifolds can support modular service access, blind-mate interfaces, and scalable coolant distribution.

Why does pressure drop matter when selecting liquid cooling connectors?

Pressure drop matters because excessive restriction can reduce available coolant flow and affect cooling balance across the system. Engineers should evaluate connector flow performance as part of the full coolant path, including cold plates, manifolds, tubing, CDU infrastructure, and source-line connections.
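Evaluating connectors as part of the full coolant path can be sketched as a simple series pressure budget. The example below assumes each component follows a quadratic loss model, ΔP = k · Q², which is a common approximation for turbulent flow through fittings; microchannel cold plates may behave closer to linearly. The loss coefficients and the 5 L/min flow are hypothetical placeholders, not measured or published values, and the helper names are assumptions.

```python
def pressure_drop_kpa(k_kpa_per_lpm2: float, flow_lpm: float) -> float:
    """Quadratic loss model: dP = k * Q^2 (assumed approximation)."""
    return k_kpa_per_lpm2 * flow_lpm ** 2


# Hypothetical loss coefficients for one tray branch, in kPa/(L/min)^2.
LOOP_COEFFICIENTS = {
    "manifold branch": 0.04,
    "supply quick disconnect": 0.08,
    "tubing": 0.05,
    "cold plate": 0.60,
    "return quick disconnect": 0.08,
}


def loop_budget(coefficients, flow_lpm):
    """Return per-component drops, the series total, and each component's share."""
    drops = {name: pressure_drop_kpa(k, flow_lpm) for name, k in coefficients.items()}
    total = sum(drops.values())  # components in series: pressure drops add
    shares = {name: dp / total for name, dp in drops.items()}
    return drops, total, shares


if __name__ == "__main__":
    drops, total, shares = loop_budget(LOOP_COEFFICIENTS, flow_lpm=5.0)
    for name, dp in drops.items():
        print(f"{name:<26} {dp:6.2f} kPa  ({shares[name]:.1%})")
    print(f"{'total':<26} {total:6.2f} kPa")
```

Because the pump's available head is fixed, any pressure spent in the quick disconnects is pressure that is not available to push coolant through the cold plates, which is why the connector's share of the total is worth tracking.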
